The Transformer Architecture in AI: Explained with Examples & Flowcharts

Introduction

The Transformer architecture has reshaped how machines understand language and other sequential patterns. Introduced by Vaswani et al. in the 2017 paper “Attention Is All You Need,” this deep learning model has outperformed earlier recurrent models such as RNNs and LSTMs on tasks like machine translation. But what makes the Transformer architecture in AI so revolutionary? Let’s break it down.

What is the Transformer Architecture in AI?

The Transformer is a neural network architecture built on attention mechanisms combined with feed-forward layers, with no recurrence or convolution. Unlike RNNs, which process tokens one at a time, Transformers process all positions of an input sequence in parallel, which makes training much faster on parallel hardware such as GPUs.

Key Components of the Transformer

1. Transformer Architecture Overview (Flowchart)

The following flowchart represents the Transformer model’s architecture, showing the flow of data from input to output:

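[Input Sequence] → [Embedding + Positional Encoding] → [Encoder Layers (×N): Multi-Head Self-Attention → Feed-Forward Network] →

[Decoder Layers (×N): Masked Self-Attention → Encoder-Decoder Attention → Feed-Forward Network] → [Linear + Softmax] → [Final Output]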

2. Self-Attention Mechanism (Flowchart)

Self-attention assigns importance to different words in a sequence. The following flowchart illustrates how it works:

[Input Sequence] → [Query, Key, Value Projections (Q, K, V)] → [Dot Product of Q and Kᵀ] → [Scale by 1/√d_k] →

[Softmax (Attention Weights)] → [Weighted Sum of Values] → [Output Sequence]
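Written as an equation, this is the scaled dot-product attention defined by Vaswani et al. The scores are divided by √d_k, where d_k is the dimension of the key vectors, which keeps large dot products from pushing the softmax into regions with very small gradients. Here X is the matrix of input embeddings (one row per token) and W^Q, W^K, W^V are learned weight matrices:

```latex
Q = X W^{Q}, \qquad K = X W^{K}, \qquad V = X W^{V}

\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\!\left(\frac{Q K^{\top}}{\sqrt{d_k}}\right) V
```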

3. Encoder-Decoder Process (Flowchart)

This chart explains how input is encoded and decoded:

[Input Text] → [Encoder] → [Latent Representation] → [Decoder] → [Final Output]

4. Encoder-Decoder Structure

The Transformer model consists of two main components:

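- Encoder: Processes the input sequence and produces a contextual representation (a vector) for each input token.

- Decoder: Uses the encoder’s representations, together with the tokens it has already generated, to produce the output sequence one token at a time. This encoder-decoder setup was originally designed for machine translation.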
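Because the decoder generates output one token at a time, each position must not attend to later positions. The original paper enforces this by masking future positions in the decoder’s self-attention, setting their scores to -inf before the softmax so they receive zero weight. The following is a minimal pure-Python sketch, assuming the raw attention scores are already computed; the function names are illustrative and not taken from any library:

```python
import math

NEG_INF = float("-inf")


def causal_mask(n):
    """n x n mask: 0.0 where position i may attend to j (j <= i), -inf for future positions."""
    return [[0.0 if j <= i else NEG_INF for j in range(n)] for i in range(n)]


def softmax(row):
    """Numerically stable softmax; exp(-inf) evaluates to 0.0."""
    m = max(row)  # the diagonal is never masked, so m is finite
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def masked_weights(scores):
    """Add the causal mask to raw attention scores, then normalize each row."""
    mask = causal_mask(len(scores))
    return [softmax([s + m for s, m in zip(row, mask_row)])
            for row, mask_row in zip(scores, mask)]


# Equal raw scores for a 3-token sequence: each position spreads its
# attention only over itself and the positions before it.
for row in masked_weights([[0.0] * 3 for _ in range(3)]):
    print([round(w, 2) for w in row])
```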

5. Self-Attention Mechanism

Self-attention allows the model to weigh the importance of different words in a sequence. For example, in a sentence like “The cat sat on the mat,” the word “cat” might have a stronger connection to “sat” than to “mat.”
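To make the idea concrete, here is a small pure-Python sketch of scaled dot-product self-attention on the example sentence’s key words. The 2-dimensional vectors are hand-picked for illustration, not learned embeddings, and the sketch uses them directly as queries, keys, and values; a real model would first multiply them by learned projection matrices:

```python
import math


def softmax(row):
    m = max(row)  # subtracting the max avoids overflow in exp()
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def matmul(a, b):
    """Multiply an (n x m) matrix by an (m x p) matrix, both given as lists of rows."""
    return [[sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def transpose(m):
    return [list(col) for col in zip(*m)]


def scaled_dot_product_attention(q, k, v):
    """Return (outputs, weights) for softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(k[0])
    scores = matmul(q, transpose(k))  # similarity of every query with every key
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v), weights


# Hypothetical toy vectors: "cat" is placed closer to "sat" than to "mat".
tokens = ["cat", "sat", "mat"]
x = [[1.0, 0.0],   # cat
     [0.8, 0.6],   # sat
     [0.0, 1.0]]   # mat

# Self-attention: queries, keys, and values all come from the same sequence.
outputs, weights = scaled_dot_product_attention(x, x, x)
for tok, row in zip(tokens, weights):
    print(tok, [round(w, 2) for w in row])
```

Each row of `weights` sums to 1 and says how much that word draws on every other word; with these vectors, “cat” puts more weight on “sat” than on “mat”.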

6. Positional Encoding

Since Transformers do not process data sequentially like RNNs, they need positional encodings to retain order information in sequences.
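The original paper uses fixed sinusoidal encodings that are added to the token embeddings: PE(pos, 2i) = sin(pos / 10000^(2i/d_model)) and PE(pos, 2i+1) = cos(pos / 10000^(2i/d_model)). A minimal pure-Python sketch (many later models learn positional embeddings instead):

```python
import math


def sinusoidal_positional_encoding(seq_len, d_model):
    """Return a seq_len x d_model table of sinusoidal positional encodings."""
    pe = [[0.0] * d_model for _ in range(seq_len)]
    for pos in range(seq_len):
        for i in range(0, d_model, 2):  # i is the even dimension index ("2i" in the formula)
            angle = pos / (10000 ** (i / d_model))
            pe[pos][i] = math.sin(angle)
            if i + 1 < d_model:  # guard in case d_model is odd
                pe[pos][i + 1] = math.cos(angle)
    return pe


def add_positional_encoding(embeddings):
    """Add position information to token embeddings by element-wise sum."""
    pe = sinusoidal_positional_encoding(len(embeddings), len(embeddings[0]))
    return [[e + p for e, p in zip(emb, pos)] for emb, pos in zip(embeddings, pe)]


# The same (hypothetical) embedding at two positions becomes two different vectors.
same_word_twice = [[0.5] * 4, [0.5] * 4]
print(add_positional_encoding(same_word_twice))
```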

7. Multi-Head Attention

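Instead of using a single self-attention mechanism, Transformers run several attention heads in parallel. Each head has its own learned query, key, and value projections, so different heads can learn to focus on different relationships in the input. The heads’ outputs are concatenated and projected back to the model dimension.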
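A simplified pure-Python sketch of multi-head self-attention follows. As a stated simplification, it splits each token vector into equal slices, one per head, which amounts to using identity matrices for the per-head projections, and it omits the final output projection. Real implementations learn both, but the split-attend-concatenate structure is the same:

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of scores."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention(q, k, v):
    """Scaled dot-product attention on lists of row vectors."""
    d_k = len(k[0])
    out = []
    for qi in q:
        scores = [sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d_k) for kj in k]
        w = softmax(scores)
        # Weighted sum of the value vectors, one output column at a time
        out.append([sum(w[j] * v[j][c] for j in range(len(v))) for c in range(len(v[0]))])
    return out


def split_heads(x, num_heads):
    """Slice each token vector into num_heads equal, contiguous chunks."""
    d_model = len(x[0])
    if d_model % num_heads != 0:
        raise ValueError("d_model must be divisible by num_heads")
    d_head = d_model // num_heads
    return [[row[h * d_head:(h + 1) * d_head] for row in x] for h in range(num_heads)]


def multi_head_self_attention(x, num_heads):
    """Simplified: identity per-head projections and no output projection."""
    heads = split_heads(x, num_heads)
    head_outputs = [attention(h, h, h) for h in heads]  # each head attends independently
    # Concatenate the heads' outputs back into d_model-sized vectors
    return [[val for head in head_outputs for val in head[i]] for i in range(len(x))]


# Hypothetical 3-token input with d_model = 4, split across 2 heads
x = [[1.0, 0.0, 0.0, 1.0],
     [0.0, 1.0, 1.0, 0.0],
     [1.0, 1.0, 0.0, 0.0]]
print(multi_head_self_attention(x, num_heads=2))
```

Because each head computes its own softmax over its own slice, two heads can assign very different attention weights to the same pair of tokens.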

8. Feed-Forward Neural Networks

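Each attention sub-layer is followed by a position-wise feed-forward network: two fully connected layers with a ReLU activation in between, applied to each position independently. It further transforms the features that attention produces at every position.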
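The original paper defines this network as FFN(x) = max(0, xW1 + b1)W2 + b2, with d_model = 512 and an inner dimension d_ff = 2048. A minimal pure-Python sketch with tiny, hypothetical weights (d_model = 2, d_ff = 3) chosen only so the arithmetic is easy to follow:

```python
def relu(vec):
    return [max(0.0, v) for v in vec]


def linear(vec, w, b):
    """vec has length d_in, w is a d_in x d_out list of rows, b has length d_out."""
    return [sum(vec[i] * w[i][j] for i in range(len(vec))) + b[j] for j in range(len(b))]


def position_wise_ffn(seq, w1, b1, w2, b2):
    """FFN(x) = max(0, x W1 + b1) W2 + b2, applied to every position separately."""
    return [linear(relu(linear(x, w1, b1)), w2, b2) for x in seq]


# Hypothetical toy weights: expand 2 -> 3 dimensions, then project back to 2
w1 = [[1.0, 0.0, -1.0],
      [0.0, 1.0, 1.0]]
b1 = [0.0, 0.0, 0.0]
w2 = [[1.0, 0.0],
      [0.0, 1.0],
      [1.0, 1.0]]
b2 = [0.0, 0.0]

seq = [[1.0, 2.0], [2.0, -1.0]]  # two positions, d_model = 2
print(position_wise_ffn(seq, w1, b1, w2, b2))
```

The same weights are shared across positions, but no information flows between positions here; mixing across tokens happens only in the attention sub-layers.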

9. Layer Normalization and Residual Connections

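Each sub-layer (attention or feed-forward) is wrapped in a residual connection, which adds the sub-layer’s input to its output, followed by layer normalization. These techniques help stabilize training, improve gradient flow through deep stacks of layers, and speed up convergence.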
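In the original paper, each sub-layer’s output is LayerNorm(x + Sublayer(x)). Many later models instead apply the normalization before the sub-layer (“pre-norm”). A minimal pure-Python sketch of the original “add & norm” step, assuming a single token vector and omitting layer normalization’s learnable gain and bias parameters for brevity:

```python
import math


def layer_norm(x, eps=1e-5):
    """Normalize one vector to zero mean and unit variance (no learned gain/bias)."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]


def add_and_norm(x, sublayer):
    """Residual connection around `sublayer`, then layer normalization."""
    y = sublayer(x)
    return layer_norm([a + b for a, b in zip(x, y)])


# Hypothetical sub-layer standing in for attention or the feed-forward network
def half(v):
    return [0.5 * a for a in v]


print(add_and_norm([1.0, 2.0, 3.0], half))
```

The residual path lets gradients flow straight through the addition, even when the sub-layer’s own gradient is small.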

Advantages of the Transformer Architecture in AI

- Parallel Processing: Unlike RNNs, which must process tokens one after another, Transformers process all positions of a sequence at once during training, significantly reducing training time.

- Better Long-Range Dependencies: Recurrent models struggle to carry information across long sentences, but self-attention connects every pair of positions directly in a single step, so Transformers capture long-range dependencies more effectively.

- Scalability: Transformers can be scaled up into larger models like GPT-4 and BERT, making them powerful tools for AI research and applications.

Applications of Transformers in AI

1. Natural Language Processing (NLP)

- Machine Translation: Models like Google’s T5 and Facebook’s M2M-100 use Transformers for multi-language translation.

- Text Summarization: BART and PEGASUS models generate concise and meaningful summaries.

- Sentiment Analysis: BERT and RoBERTa classify emotions and sentiments in texts.

2. Computer Vision

- Vision Transformers (ViTs): Used for image recognition, ViTs have achieved performance comparable to convolutional neural networks (CNNs).

3. Speech Recognition

- Models like Whisper by OpenAI use Transformer-based architectures for converting speech to text with high accuracy.

4. Code Generation

- OpenAI Codex and GitHub Copilot leverage Transformer models to generate code from natural language prompts.

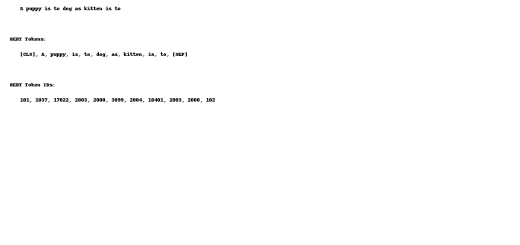

Real-World Example: GPT and BERT

Two of the most well-known Transformer-based models are:

- GPT (Generative Pre-trained Transformer): Used for text generation, dialogue systems, and creative writing tasks.

- BERT (Bidirectional Encoder Representations from Transformers): Used for search engines, sentiment analysis, and NLP tasks that require deep contextual understanding.

Challenges and Limitations of Transformers

Despite their advantages, Transformers also have challenges:

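- High Computational Cost: Self-attention compares every token with every other token, so its compute and memory grow quadratically with sequence length, and training large models requires significant GPU resources.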

- Data-Hungry: Needs large datasets for effective performance.

- Interpretability Issues: Hard to understand how decisions are made within deep layers.

Future of Transformer Models in AI

- Efficient Transformers: Research is ongoing to develop lightweight Transformers for mobile and edge computing.

- Hybrid Models: Combining CNNs, RNNs, and Transformers for enhanced AI applications.

- Ethical AI: Reducing bias and ensuring the responsible use of AI models in decision-making systems.

Conclusion – Transformer Architecture in AI

The Transformer architecture has transformed AI and NLP, enabling groundbreaking innovations like ChatGPT, BERT, and ViTs. With continued research, Transformers will further shape the future of AI, making it more efficient, accessible, and powerful.

FAQs – Transformer Architecture in AI

1. What makes Transformers different from RNNs and LSTMs?

Unlike RNNs and LSTMs, Transformers process entire input sequences at once using self-attention, making them faster and better at handling long-range dependencies.

2. Why are Transformers used in NLP?

Transformers provide superior performance in tasks like translation, summarization, and question-answering due to their ability to process context effectively.

3. Can Transformers be used for image processing?

Yes! Vision Transformers (ViTs) apply Transformer principles to image recognition, achieving results comparable to CNNs.

4. Are Transformers only useful for large-scale AI models?

While they excel in large models, research is ongoing to make smaller, efficient Transformers for broader applications.

5. What is the future of Transformers in AI?

The future includes more efficient, scalable, and ethical Transformer models for diverse applications beyond NLP, including robotics and healthcare.

Recent Comments